You can also see the log of the execution in the lower window. When you click on the job it shows whether it is still executing or if it’s stopped. Once you finish the wizard, you will see a new export job added in the DBA window. >sqlplus /nolog SQL> connect SYSTEM/fyicenter SQL> COL. In my example, I set it to be ~2GB for each file. The tutorial exercise below tells you find what is your default dump directory and locate the dump file. You can control how large each of the export files can be. This will happen if the export is large in size. This tells the wizard to use a numeric increment on the suffix of the file name, in case I end up with an export that consists of more than one file. Notice that my File Name in the following screenshot ends with %U. In the following example, the name of the DIRECTORY is DP_FROM_ONPREM:ĬREATE DIRECTORY DP_FROM_ONPREM AS ‘/u01/app/oracle/admin/ORCL/dpdump/files’ Use the following SQL command to create a directory if you don’t already have one. This directory needs to be created before you continue, in case you don’t already have a directory setup with the proper permissions to export files to. On the following screen, select the DIRECTORY that will contain the output dump files. Since my aim here is to later import into ADW, I kept it to the default value of Compatible. If you are going to import later into a lower version Oracle DB, you want to set the version number to that of the target database. 526 catalog.sql script, 382 catproc.sql script, 382 catrep.sql script, 382 CBT. Note the VERSION dropdown in the following screen. background dump ( bdump ) files, 74 background of DBAS ( database. The following type of objects are recommended to be excluded for ADW purposes: Index, Index Type, Cluster, Materialized View, Materialized View Log, Materialized Zonemap, and DB Link. For example, performance is likely better without creating indexes on ADW, and that is why we exclude them from the export. If the purpose of the export is to later import the data dump into ADW, it is recommended to exclude certain database objects that are not necessary on ADW. We can export one or multiple schemas at the same time by selecting them. This allows me to merge several schemas from different source databases into the same target ADW. In this example, I chose to do a Schema export.

There are a few different types of data pump exports. Right click and select the Run Data Export Wizard. Then under that connection, navigate down to Data Pump -> Export Jobs. You should be able to call those packages directly, so I would start there. But if you really wanted to use that functionality from within SQL Developer (or SQL Plus), Data Pump uses the built-in DBMSDATAPUMP and DBMSMETADATA packages.

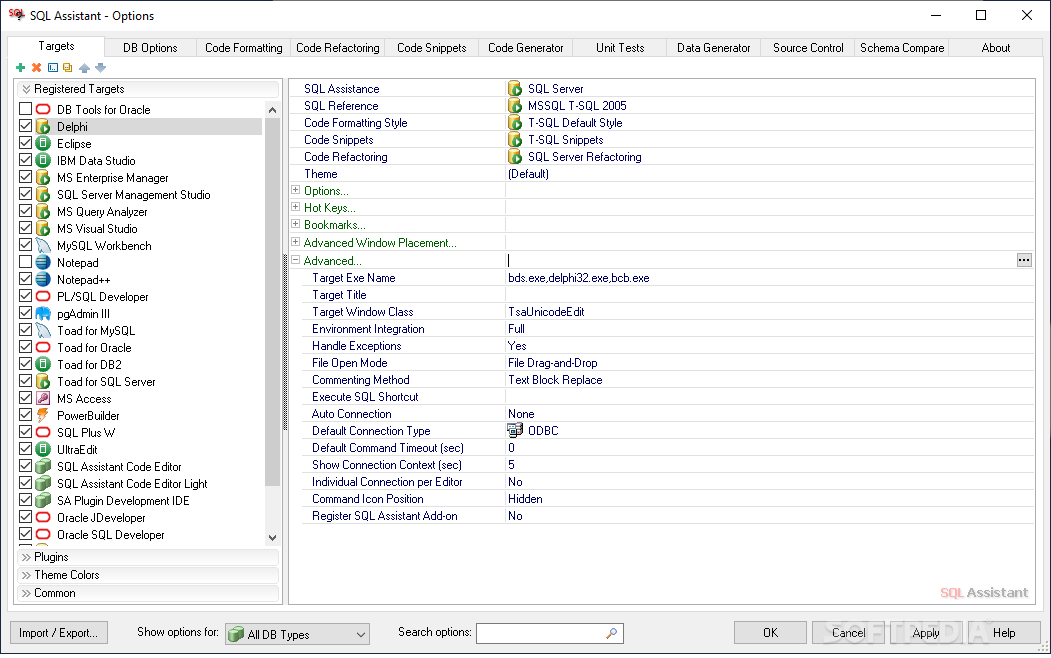

Add the connection to the source database to export from. 5 As far as I know, Import/Export and Data Pump are command-line-only types of tools. In order to access the Data Pump export Wizard, first display the DBA window from the View menu. In this post, I use SQL Developer to run the Data Export Wizard. These dump files can then be imported into another Oracle DB or Autonomous Data Warehouse (ADW).

ORACLE SQL DEVELOPER DUMP HOW TO

In this blog post I demonstrate how to export Oracle Database schemas into dump files. Hope Gary's answer will guide you to some extent.Guide to Oracle Cloud: 5 Steps to Ensure a Successful Move to the CloudĮxplore key considerations, integrating the cloud with legacy applications and challenges of current cloud implementations. All rows were included.Īnd as the summary, it suggested that the SQL developer is not the best tool to open a large size of data file. I just tried your scenario on at 55000 row table that produced an export.sql of about 20MB.

ORACLE SQL DEVELOPER DUMP FULL

And if you don't want to open files automatically in order to suppress the warning dialog, use Tools|Preferences|Database|Export/View DDL Options and un-check the "Open Sql File When Exported" box.Īre you certain the export file does not contain all the insert rows? That would be a bug unless you hit an OutOfMemory or disk full condition. For those huge files, please use an external editor. To view the file from within SQL Developer despite this limitation, just use the File|Open menu. By limiting this, we nip in the bud any potential complaints of Java OutOfMemory upon trying to open a huge file. It appears that the "maximum automatic open size" is hard-coded to a value of 500000 (bytes, I believe) with no way to override it.

Found the below answer from a SQL Developer forum :